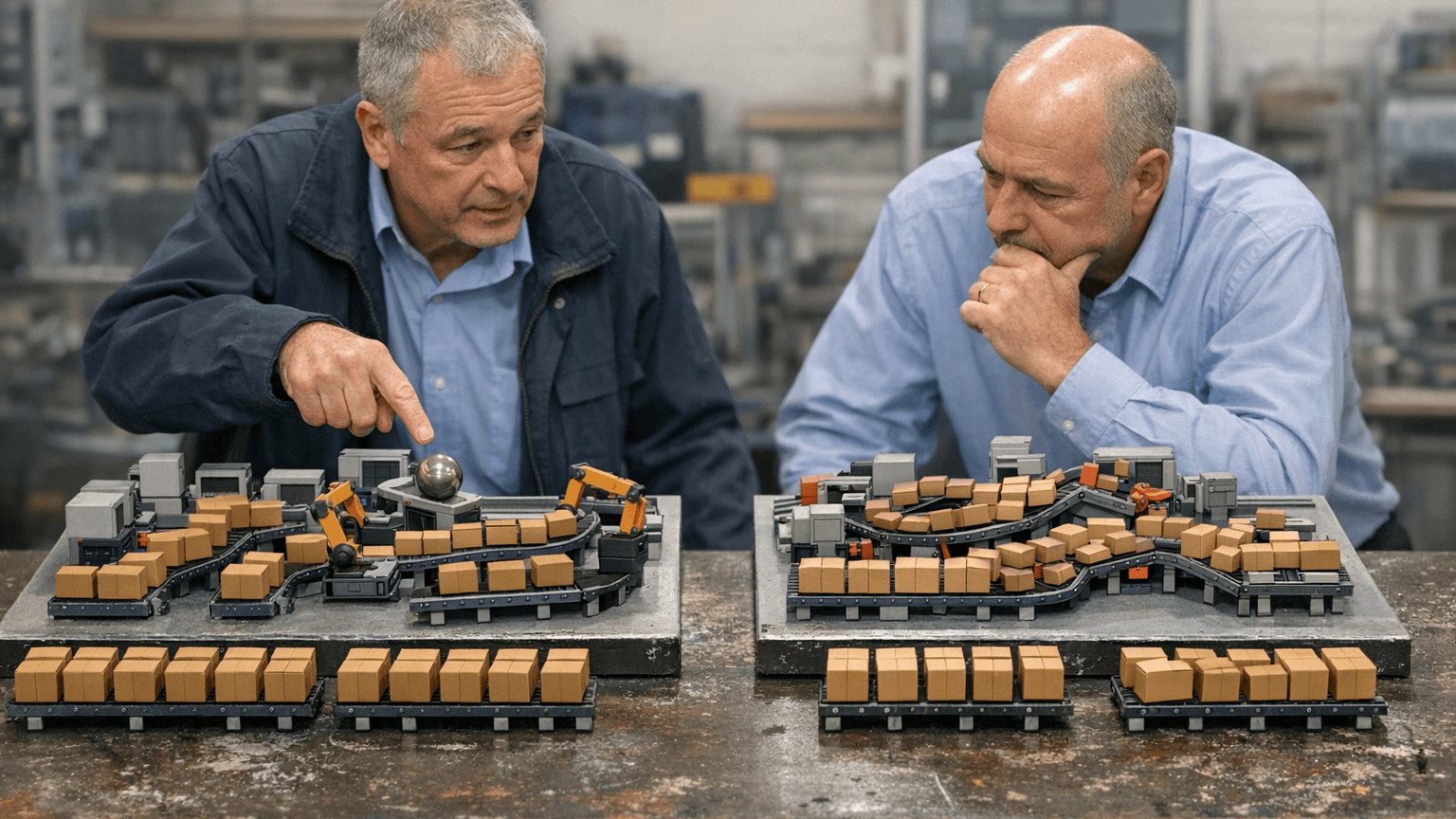

How to Compare Resilience, Not Just Throughput, in Factory Scenarios

Resilience dimensions beside throughput

- Time to recover a named service target after a shock.
- Constraint migration under failure or absenteeism.
- Inventory and WIP exposure when inbound slips or quality spikes.
- Flexible staffing feasibility: whether you can cover variance without overtime collapse.
- Optionality: how fast you can re-route or rebalance with existing assets.

Label each dimension with an evidence grade when data is thin.

You are not trying to predict every disruption; you are forcing options to show their shape under plausible pressure. That shape is what operations will recognize when the spreadsheet is gone.
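To make the first dimension concrete, here is a minimal Python sketch of a recovery clock. It assumes you have one service-level reading per shift from a simulation run. The function name, the `hold_shifts` rule, and the sample numbers are illustrative assumptions, not part of any specific tool.

```python
from typing import Optional, Sequence


def shifts_to_recover(
    service_by_shift: Sequence[float],
    shock_shift: int,
    target: float,
    hold_shifts: int = 2,
) -> Optional[int]:
    """Count shifts from the shock until service is back at the target.

    Recovery only counts once the target has been held for `hold_shifts`
    consecutive shifts, so one lucky shift does not stop the clock.
    Returns None if the series ends before a sustained recovery.
    """
    if hold_shifts < 1:
        raise ValueError("hold_shifts must be at least 1")
    run = 0
    for shift in range(shock_shift, len(service_by_shift)):
        if service_by_shift[shift] >= target:
            run += 1
            if run == hold_shifts:
                # Recovery point is the first shift of the sustained run.
                return (shift - hold_shifts + 1) - shock_shift
        else:
            run = 0
    return None


# Illustrative readings only: the shock lands at shift index 2.
service = [0.97, 0.96, 0.70, 0.80, 0.96, 0.90, 0.95, 0.97, 0.96]
recovery = shifts_to_recover(service, shock_shift=2, target=0.95)
```

Requiring the target to hold for more than one shift is a design choice. It keeps a brief rebound (shift 4 in the sample) from being read as recovery.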

Resilience scorecard pattern

Use the same table for every option so comparisons stay fair: scenario story, throughput outcome, recovery time, constraint shift, risk note. In the base case, rank options on throughput and use recovery time as the tie-breaker. In supplier-slip and station-outage stories, recovery time and constraint migration often become the primary reads.

Keep the language executive-plain: “Option A wins average hours; Option B recovers two shifts faster when Station 3 is down” is a decision, not a seminar.
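The scorecard can be sketched as a small data structure with two ranking rules. This is a hedged Python illustration. The field names, units, and sample figures are assumptions made for the example, chosen to mirror the Option A and Option B sentence above.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class ScorecardRow:
    option: str
    scenario: str                # scenario story, e.g. "base"
    throughput_per_shift: float  # throughput outcome (unit is an assumption)
    recovery_shifts: float       # recovery time; 0 when the story has no shock
    constraint_shift: str        # where the bottleneck moves, in plain words
    risk_note: str


def rank_options(
    rows: List[ScorecardRow], scenario: str, recovery_first: bool
) -> List[str]:
    """Rank options within one scenario story.

    recovery_first=False: throughput leads, recovery time breaks ties.
    recovery_first=True: recovery time leads, throughput breaks ties.
    """
    picked = [r for r in rows if r.scenario == scenario]
    if recovery_first:
        picked.sort(key=lambda r: (r.recovery_shifts, -r.throughput_per_shift))
    else:
        picked.sort(key=lambda r: (-r.throughput_per_shift, r.recovery_shifts))
    return [r.option for r in picked]


# Illustrative numbers only, not results from a real model.
rows = [
    ScorecardRow("Option A", "base", 420.0, 0.0, "none", "tight on buffers"),
    ScorecardRow("Option B", "base", 410.0, 0.0, "none", "more floor space"),
    ScorecardRow("Option A", "station_3_outage", 300.0, 5.0,
                 "moves to Station 5", "no re-route path"),
    ScorecardRow("Option B", "station_3_outage", 290.0, 3.0,
                 "moves to Station 4", "re-route via parallel cell"),
]

base_rank = rank_options(rows, "base", recovery_first=False)
outage_rank = rank_options(rows, "station_3_outage", recovery_first=True)
```

With these sample rows, Option A leads the base case and Option B leads the outage story. Every option shares the same row type, so no option gets a friendlier table.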

Stress pack resilience checks

- At least one disruption story is in the pack; this is non-negotiable.
- Recovery is defined as a measurable target, not a feeling.
- Sensitivity runs cover absenteeism or skill mix when labor is a constraint.
- Finance can see how resilience metrics tie to working capital and service penalties.

If finance only sees throughput, capital choices will keep optimizing for the wrong weather. Working capital and service exposure belong in the same frame as the layout sketch.
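The checks in this section can be run as a simple gate before a pack goes to review. The Python sketch below is one possible shape, assuming a hypothetical `StressPack` record. All names and string keys are invented for illustration. The code only confirms that a metric and threshold are written down; it cannot judge whether the target is a sensible one.

```python
from dataclasses import dataclass, field
from typing import List, Optional, Set


@dataclass
class Scenario:
    name: str
    is_disruption: bool
    recovery_metric: Optional[str] = None       # e.g. "on-time shipments"
    recovery_threshold: Optional[float] = None  # e.g. 0.95


@dataclass
class StressPack:
    scenarios: List[Scenario]
    labor_is_constraint: bool
    sensitivities: Set[str] = field(default_factory=set)
    finance_links: Set[str] = field(default_factory=set)


def check_pack(pack: StressPack) -> List[str]:
    """Return the list of gaps; an empty list means every check passed."""
    gaps: List[str] = []
    disruptions = [s for s in pack.scenarios if s.is_disruption]
    if not disruptions:
        gaps.append("no disruption story in the pack")
    for s in disruptions:
        if s.recovery_metric is None or s.recovery_threshold is None:
            gaps.append(f"{s.name}: recovery has no measurable target")
    labor_runs = {"absenteeism", "skill_mix"} & pack.sensitivities
    if pack.labor_is_constraint and not labor_runs:
        gaps.append("labor is a constraint but no absenteeism or skill-mix run")
    missing = {"working_capital", "service_penalties"} - pack.finance_links
    if missing:
        gaps.append("finance view missing: " + ", ".join(sorted(missing)))
    return gaps


# A deliberately thin pack, to show what the gate reports.
thin_pack = StressPack(
    scenarios=[Scenario("base", False), Scenario("supplier_slip", True)],
    labor_is_constraint=True,
    finance_links={"working_capital"},
)
thin_gaps = check_pack(thin_pack)
```

Returning a list of gaps rather than a pass or fail flag keeps the output readable in a review memo.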

CAPEX and footprint consequences

Resilience differences change how much buffer you must fund, how rigid a line becomes under mix variance, and whether a cheaper layout buys you a brittle network. Those are CAPEX-adjacent costs even when they do not show up as steel and concrete on day one.

What should feel different on Monday

Teams rarely fail because they lack intelligence; they fail because the next meeting repeats the same questions with fresher anxiety. When simulation work is wired into how you decide, Monday shows up with fewer circular arguments about whether a layout "ought to work." Instead, you carry a short list: which option survived the same stress vocabulary, which assumptions still carry hypothesis labels, and what would force you to rerun the pack before the next tranche. That is the practical face of governance—not a heavier process, but a clearer receipt for why the floor should trust the plan.

For capital and footprint choices, the receipt matters as much as the ranking. Approvers should be able to point to scenario identity and ranges without opening a model. If executives cannot explain the downside story in plain language, the organization is still buying animation. If operations cannot recognize the staffing and flow assumptions embedded in the memo, the twin is still a slide, not a decision system. Use the next leadership block to test whether the narrative is portable: could someone not in the room defend the choice from the packet alone? If not, tighten the assumption ledger and the executive summary before you ask for more money or more floor space.

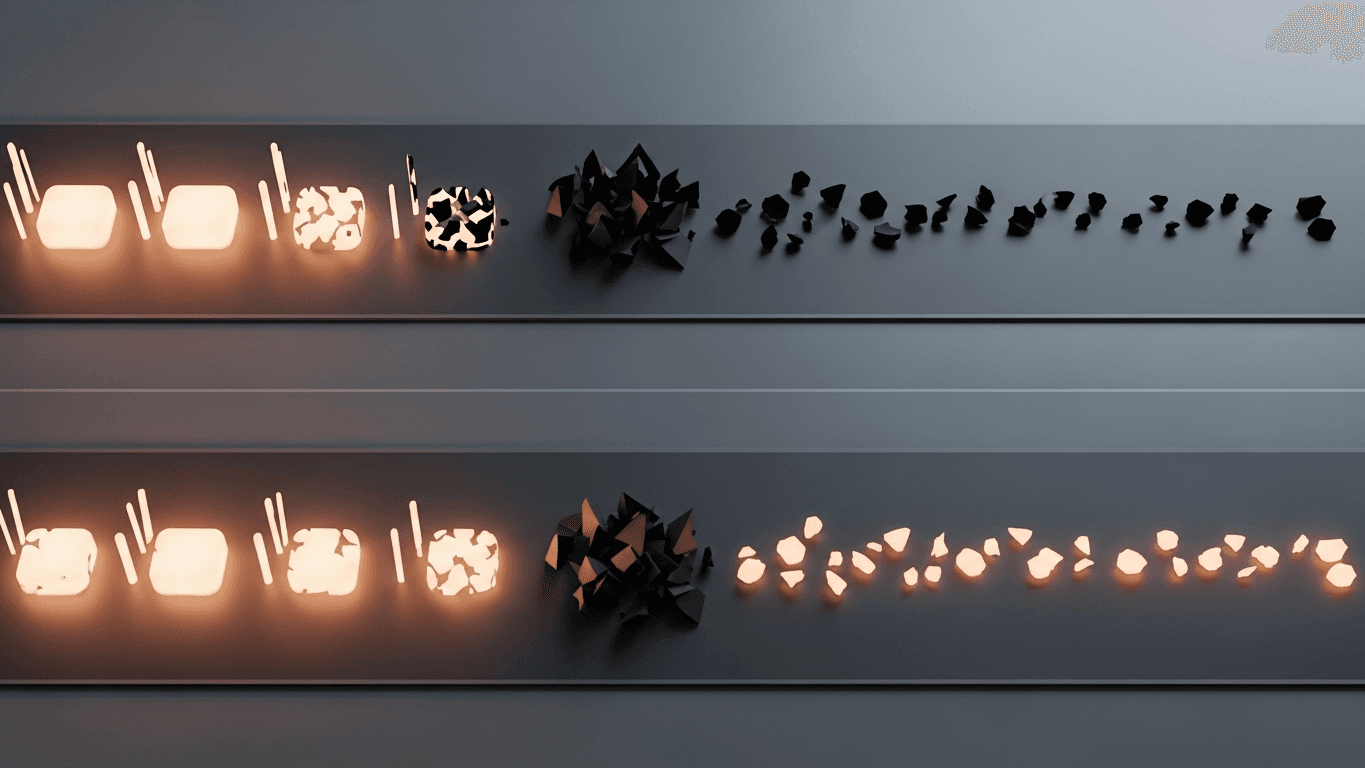

What DBR77 Digital Twin adds

DBR77 Digital Twin keeps disruption and recovery stories inside the same comparison frame as headline throughput so fragile options cannot hide behind averages.

Bottom line

If every option wins on average and loses differently under stress, average is the wrong judge. Compare bad weeks on purpose.

DBR77 Digital Twin helps teams keep disruption stories inside the same comparison workflow as throughput scenarios. Book a demo or Explore Digital Twin.
