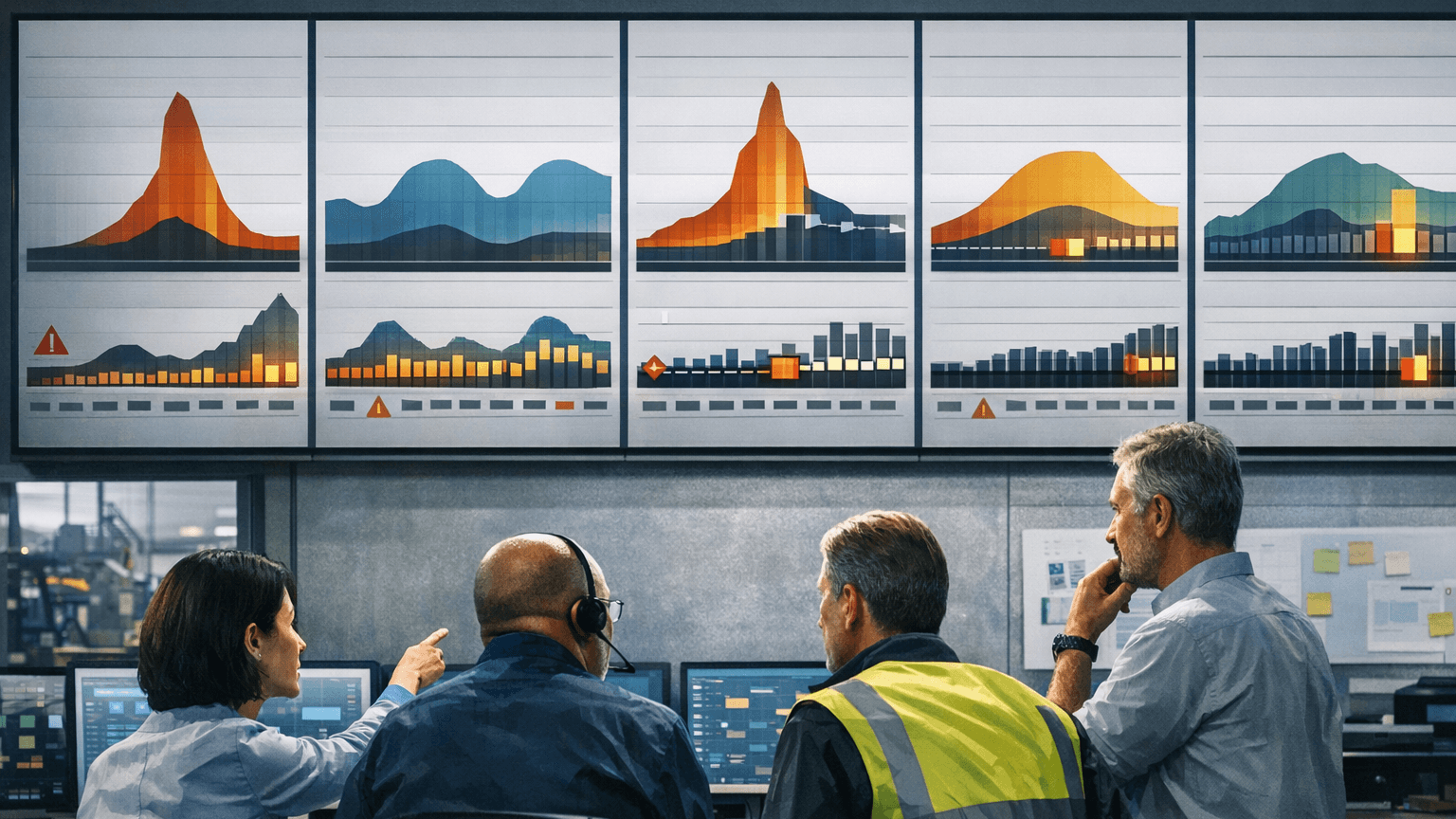

How to Test Capacity Decisions Before the Next Demand Shift

Frame the decision as a comparison

Write the decision sentence before any modeling detail. Examples: overtime-first versus incremental headcount versus targeted bottleneck investment for the next eighteen months; defer line B expansion until line A stabilizes under a new product family; choose between two shift patterns under a stated uplift scenario. If you cannot compare alternatives, you do not have a decision yet—you have a mood.

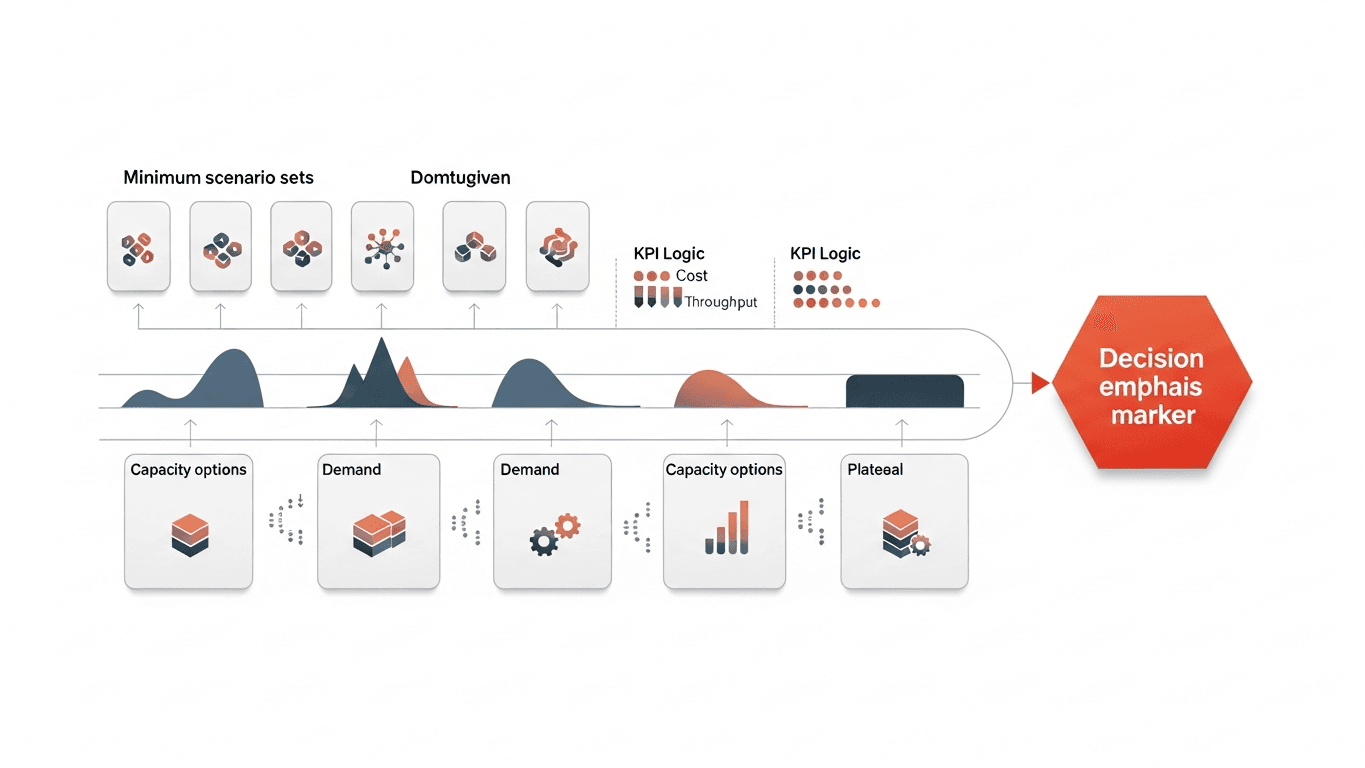

Minimum scenario set

Run at least four scenarios: a level shift (uniform uplift or decline near the base case), a mix shift (volume stable, but the family distribution changes enough to alter cycle times and changeovers), a spike week (a short high-load window with realistic recovery), and a ramp curve (month-by-month growth with honest hiring and training lag). You are not predicting which story will happen; you are learning which plan breaks first.
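
The four demand shapes can be written down as simple weekly profiles before any simulation tool is involved. The sketch below is illustrative only: the family names, baseline volumes, uplift, spike factor, and growth rate are all assumed values, not figures from a real plant.

```python
# Hypothetical baseline: weekly demand in units per product family.
BASELINE = {"family_a": 600.0, "family_b": 400.0}
WEEKS = 12


def level_shift(baseline, weeks, uplift):
    """Uniform uplift (or decline, if negative) applied to every family."""
    return [{f: v * (1 + uplift) for f, v in baseline.items()} for _ in range(weeks)]


def mix_shift(baseline, weeks, shares):
    """Total volume unchanged; family distribution replaced by `shares`."""
    total = sum(baseline.values())
    return [{f: total * s for f, s in shares.items()} for _ in range(weeks)]


def spike_week(baseline, weeks, spike_at, factor):
    """One short high-load week; every other week stays at baseline."""
    return [
        {f: v * (factor if w == spike_at else 1.0) for f, v in baseline.items()}
        for w in range(weeks)
    ]


def ramp_curve(baseline, weeks, monthly_growth):
    """Step growth every four weeks, compounding month over month."""
    return [
        {f: v * (1 + monthly_growth) ** (w // 4) for f, v in baseline.items()}
        for w in range(weeks)
    ]


# Assumed parameter values, chosen only to make the shapes visible.
scenarios = {
    "level_shift": level_shift(BASELINE, WEEKS, uplift=0.10),
    "mix_shift": mix_shift(BASELINE, WEEKS, shares={"family_a": 0.4, "family_b": 0.6}),
    "spike_week": spike_week(BASELINE, WEEKS, spike_at=5, factor=1.5),
    "ramp_curve": ramp_curve(BASELINE, WEEKS, monthly_growth=0.05),
}
```

These profiles describe demand only. Hiring and training lag, and the recovery after a spike, belong on the capacity side of whatever model consumes them.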

KPIs that keep comparisons honest

Track throughput and backlog risk at the bottleneck, WIP and queue time at the top constraint candidates, overtime and temporary labor exposure, an on-time risk proxy tied to release and shipping rules, and whether the bottleneck stays put or migrates between scenarios. If the bottleneck moves, treat it as a signal, not as a modeling glitch.
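
A rough way to check for bottleneck migration is to compute station utilization under each scenario and compare which station carries the highest load. The following is a minimal sketch using static load arithmetic, not a simulation; the station names, hours per unit, and available hours are hypothetical.

```python
# Hypothetical hours of work per unit, by station and product family.
HOURS_PER_UNIT = {
    "press": {"family_a": 0.10, "family_b": 0.05},
    "weld": {"family_a": 0.04, "family_b": 0.12},
}
# Hypothetical available hours per week at each station.
AVAILABLE_HOURS = {"press": 85.0, "weld": 90.0}


def utilization(demand):
    """Required hours divided by available hours, per station, for one week."""
    return {
        station: sum(rates[f] * units for f, units in demand.items())
        / AVAILABLE_HOURS[station]
        for station, rates in HOURS_PER_UNIT.items()
    }


def bottleneck(demand):
    """Station with the highest utilization for this week's demand."""
    util = utilization(demand)
    return max(util, key=util.get)


# Same total volume, different family mix (assumed values).
base = {"family_a": 600.0, "family_b": 400.0}
mix = {"family_a": 400.0, "family_b": 600.0}

by_scenario = {"base": bottleneck(base), "mix_shift": bottleneck(mix)}
migrated = len(set(by_scenario.values())) > 1
```

With these assumed numbers the constraint sits at the press in the base case and moves to welding under the mix shift, even though total volume is unchanged. Average-based capacity math tends to miss exactly this kind of result.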

From question to defendable comparison

Lock the decision sentence and the real alternatives. Define the baseline using recent weeks that include pain, not only smooth weeks. Encode the constraints that matter: staffing rules, tool sharing, material release, transport loops. Run the scenario set with the same randomness or trace-replay policy across alternatives, so that differences in results come from the plans and not from the random draws. Compare trade-offs in plain language (cost, risk, flexibility, time to implement) and record the assumptions that would invalidate the conclusion if wrong.
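
Using the same randomness across alternatives is often called common random numbers: each alternative is run against an identical demand trace, so results can be compared as pairs. Below is a minimal sketch with Python's standard `random` module. The backlog model, capacities, and demand parameters are simplified assumptions for illustration and do not represent how any particular tool implements this.

```python
import random


def simulate_backlog(weekly_capacity, seed, weeks=26, mean_demand=1000.0, sd=120.0):
    """Return end-of-horizon backlog for one capacity alternative.

    Demand is drawn from a generator seeded per replication, so every
    alternative sees the same demand trace for a given seed.
    """
    rng = random.Random(seed)
    backlog = 0.0
    for _ in range(weeks):
        demand = max(0.0, rng.gauss(mean_demand, sd))
        # Unserved demand carries over; backlog cannot go negative.
        backlog = max(0.0, backlog + demand - weekly_capacity)
    return backlog


# Hypothetical alternatives: weekly capacity in units.
ALTERNATIVES = {"overtime_first": 1050.0, "added_headcount": 1100.0}
SEEDS = range(20)

results = {
    name: [simulate_backlog(cap, seed) for seed in SEEDS]
    for name, cap in ALTERNATIVES.items()
}

# Paired differences: same seed, same demand trace, different plan.
paired_diff = [
    a - b for a, b in zip(results["overtime_first"], results["added_headcount"])
]
```

Because each pair shares a demand trace, a nonzero entry in `paired_diff` reflects the capacity difference rather than sampling luck. Trace replay follows the same idea, with recorded demand in place of seeded draws.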

When this approach fails

It fails when teams refuse to name constraints, when leadership changes the question weekly, when the model is tuned to reproduce the slide rather than to stress the plan, or when a polished dashboard substitutes for a decision record.

Governance that fits real factory tempo

Good governance matches the plant’s clock. Monthly operations reviews should treat forward risk as a first-class citizen, not as an appendix when slides run long. Capital forums should treat scenario IDs and assumption grades as part of the approval artifact, not as a modeler’s footnote. Post-investment reviews should be able to find the baseline story that was funded and test whether reality diverged in ways that change the next tranche.
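
One possible shape for such an approval artifact is a small structured record that carries the scenario ID, the decision sentence, and graded assumptions together. The sketch below is an assumption about what such a record could contain; the field names, the A/B/C grading scale, and the example entries are hypothetical and not a DBR77 format.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Assumption:
    text: str
    grade: str  # hypothetical scale: "A" measured, "B" estimated, "C" guessed


@dataclass
class ScenarioPack:
    scenario_id: str
    decision_sentence: str
    owner: str
    assumptions: list[Assumption] = field(default_factory=list)

    def weak_assumptions(self):
        """Assumptions graded below "A", which reviewers may want to revisit first."""
        return [a for a in self.assumptions if a.grade != "A"]


# Illustrative entries only.
pack = ScenarioPack(
    scenario_id="CAP-001",
    decision_sentence="Overtime-first versus incremental headcount for the next eighteen months",
    owner="planning",
    assumptions=[
        Assumption("Press cycle time taken from recent weeks of floor data", "A"),
        Assumption("New hires reach full productivity after eight weeks", "C"),
    ],
)
```

A post-investment review could then start from `pack.weak_assumptions()` and check those entries against what actually happened.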

When ownership is clear (who maintains structure, who certifies floor truth, who signs scenario packs), refresh events stop being personal favors and become predictable maintenance. That is how a digital twin survives turnover: the next steward inherits templates, packs, and ledgers instead of inheriting lore. If your program cannot survive a leadership change, it is still a project, not infrastructure.

What DBR77 Digital Twin adds

DBR77 Digital Twin supports practical scenario comparison with a path from manual inputs toward richer integration. For capacity decisions, that means disciplined side-by-side evaluation of staffing, shift, and investment options; variability-aware testing instead of single-point capacity math; and clearer communication with finance and sales about risk rather than false precision.

Bottom line

Test capacity decisions by comparing real alternatives under multiple demand shapes and by watching whether constraints migrate. If you only trust averages, the next demand shift will teach the same lesson—with higher urgency and less room to recover gracefully.

DBR77 Digital Twin helps planning and operations teams compare capacity options under multiple demand shapes before the next shift exposes weak assumptions. Book a demo or browse use cases.
