What a Good Simulation Input Set Looks Like Before Live Integration

The minimum decision-grade stack

Define a bounded system map that states what is in and what is intentionally out, so silent omissions cannot hide. Encode time-based process logic: sequences, routings, join points, and rework paths when they matter to the decision. At key constraints, capture median processing time and a spread justified by data or by a controlled assumption; include micro-stops when they change effective capacity. Average-only inputs are a common source of false confidence, because queues and waiting grow with variability even when the mean is unchanged.
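To make the point about average-only inputs concrete, here is a minimal sketch in Python. It is an illustration, not part of any particular tool: a single station with evenly spaced arrivals, where the station, the 1.0-minute arrival interval, the 0.9-minute mean processing time, and the exponential spread are all assumptions chosen for the example. It compares the average queue wait when every job takes exactly the mean against the wait when processing times vary around that same mean.

```python
import random

def mean_wait(service_times, interarrival):
    """Average time a job waits in queue at one station.

    Uses the Lindley recursion: the next job's wait is the previous wait
    plus the previous processing time, minus the gap until the next arrival,
    floored at zero.
    """
    wait, total = 0.0, 0.0
    for s in service_times:
        total += wait
        wait = max(0.0, wait + s - interarrival)
    return total / len(service_times)

rng = random.Random(42)   # fixed seed so the run is repeatable
n_jobs = 10_000
interarrival = 1.0        # assumed: one job arrives every minute
mean_service = 0.9        # assumed: mean processing time in minutes

# Average-only input: every job takes exactly the mean.
constant = [mean_service] * n_jobs

# Same mean, but with spread (exponential is an assumption for illustration).
variable = [rng.expovariate(1 / mean_service) for _ in range(n_jobs)]

wait_constant = mean_wait(constant, interarrival)
wait_variable = mean_wait(variable, interarrival)

print(f"mean wait, average-only input: {wait_constant:.2f} min")
print(f"mean wait, with spread:        {wait_variable:.2f} min")
```

With constant processing times the station never queues, so the average-only model reports no waiting at all. The variable case has the same nominal utilization but builds a queue, which is the effect a spread-aware input set is meant to capture.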

If mix matters, include family definitions operators recognize, changeover rules tied to realistic sequences, and scheduling policies planners actually run. Add material release and logistics rules that create waiting even when stations look available. Reflect staffing and shift mechanics as enforceable coverage, not theoretical capacity. Keep demand shapes, supply delay patterns, and shock events in a controlled layer you can edit without rebuilding the whole model.
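One way to keep that controlled layer editable is to hold it as a small, separate data structure that the rest of the model reads from. The sketch below is hypothetical: `ScenarioLayer`, its field names, and `weekly_demand` are invented for illustration and do not describe any specific product's data model.

```python
from dataclasses import dataclass, replace

@dataclass(frozen=True)
class ScenarioLayer:
    """Editable scenario inputs, kept apart from the process model itself."""
    name: str
    demand_multiplier: float = 1.0   # scales the baseline demand shape
    supply_delay_days: float = 0.0   # added inbound delay
    shock_events: tuple = ()         # free-text descriptions of shocks

def weekly_demand(base_units: float, layer: ScenarioLayer) -> float:
    """Demand the model should see for a week under the given layer."""
    return base_units * layer.demand_multiplier

baseline = ScenarioLayer(name="baseline")

# A variant changes only the layer; the baseline object is left untouched.
stress = replace(
    baseline,
    name="supplier-delay",
    demand_multiplier=1.25,
    supply_delay_days=3.0,
    shock_events=("line outage, 4 h",),
)

print(weekly_demand(1000, baseline), weekly_demand(1000, stress))
```

Because the layer is frozen and variants are created with `replace`, each scenario has a name and a fixed set of values that can be quoted in a memo without reopening the model.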

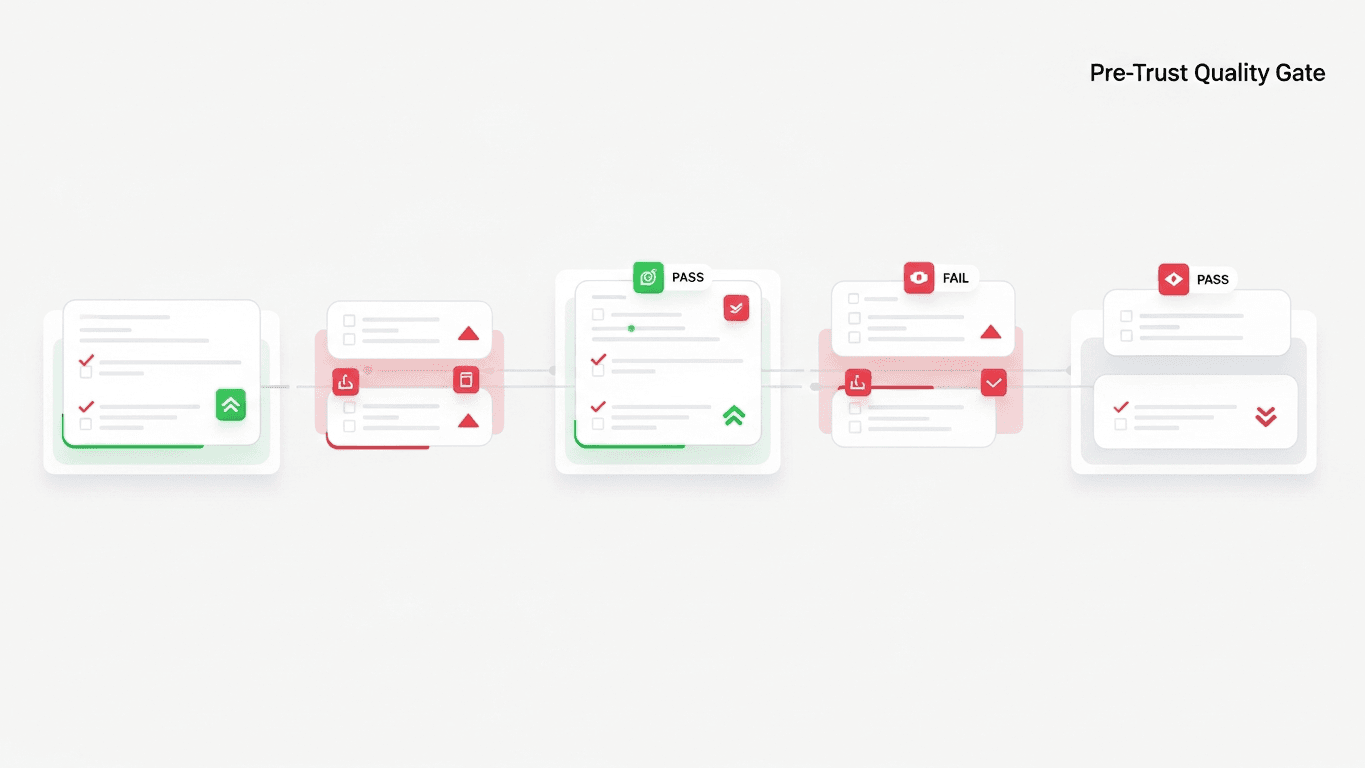

Quality checks before you trust outputs

The as-is model should reproduce a known bad week qualitatively. The bottleneck ranking in the baseline should match shop-floor intuition. Changing one key assumption should move results in a direction the team can explain. Two independent reviewers should each be able to trace every input to a source or a stated assumption. The decision sentence should survive the first modeling sprint without morphing. If the model cannot pass the bad-week test, fix the inputs before debating scenarios.
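The traceability check can be made mechanical with a simple ledger. The following is a minimal sketch under stated assumptions: the `InputRecord` structure, the input names, and the sample values are all hypothetical, chosen only to show the shape of the check.

```python
from dataclasses import dataclass
from typing import List, Optional

@dataclass
class InputRecord:
    name: str
    value: float
    source: Optional[str] = None      # where the number came from, if data exists
    assumption: Optional[str] = None  # stated rationale when no data exists

def untraced(records: List[InputRecord]) -> List[str]:
    """Names of inputs that have neither a source nor a stated assumption."""
    return [r.name for r in records if not (r.source or r.assumption)]

# Hypothetical ledger entries for illustration only.
ledger = [
    InputRecord("press_cycle_median_s", 42.0, source="time study"),
    InputRecord("changeover_min", 25.0, assumption="planner estimate, to be verified"),
    InputRecord("rework_rate", 0.04),  # no source and no assumption recorded
]

missing = untraced(ledger)
print("inputs still untraced:", missing)
```

An empty result from `untraced` does not prove the inputs are right; it only shows that each one has an owner-visible origin that a reviewer can challenge.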

What live integration adds—and does not

Live integration adds faster refresh, less manual transcription, and tighter alignment to short-horizon operations. It does not automatically clarify which decision is being tested, protect against wrong scope, or create executive alignment without explicit assumptions.

What should feel different on Monday

Teams rarely fail because they lack intelligence; they fail because the next meeting repeats the same questions with fresher anxiety. When simulation work is wired into how you decide, Monday shows up with fewer circular arguments about whether a layout "ought to work." Instead, you carry a short list: which option survived the same stress vocabulary, which assumptions still carry hypothesis labels, and what would force you to rerun the pack before the next tranche. That is the practical face of governance—not a heavier process, but a clearer receipt for why the floor should trust the plan.

For capital and footprint choices, the receipt matters as much as the ranking. Approvals should be able to point to scenario identity and ranges without opening a model. If executives cannot explain the downside story in plain language, the organization is still buying animation. If operations cannot recognize the staffing and flow assumptions embedded in the memo, the twin is still a slide, not a decision system. Use the next leadership block to test whether the narrative is portable: could someone not in the room defend the choice from the packet alone? If not, tighten the assumption ledger and the executive summary before you ask for more money or more floor space.

What DBR77 Digital Twin adds

DBR77 Digital Twin keeps early models honest: the path from manual inputs to live integration stays disciplined, so comparisons made before any live feed remain defensible and teams can prove value before committing to full live complexity.

Bottom line

A good simulation input set before live integration is bounded, time-accurate, variability-aware, and assumption-traceable. If you cannot name your key assumptions, you do not have a model problem—you have a governance problem wearing a technical mask.

DBR77 Digital Twin is built to start with disciplined manual inputs and grow into richer integration without blocking early scenario value. Book a demo or Explore Digital Twin.